Journal Article Review: Autonomous LLM-Driven Research — From Data to Human-Verifiable Research Papers

Ifargan T, Hafner L, Kern M, Alcalay O, Kishony R. Autonomous LLM-driven research — from data to human-verifiable research papers. NEJM AI. 2025;2(1). DOI: 10.1056/AIoa2400555.

BACKGROUND

Artificial intelligence (AI) is increasingly used to automate parts of scientific research, with the promise of accelerating discovery. However, it remains unclear whether AI can conduct fully autonomous research while adhering to principles such as transparency, traceability, and verifiability. This study introduces "data-to-paper," an automation platform in which large language model (LLM) agents autonomously produce research papers from annotated datasets. The platform emphasizes transparent workflows and traceable results.

What is the relevance?

This study explores the potential of AI-driven platforms to automate research in data-rich fields such as epidemiology and biomedicine, where the volume of available data often exceeds what human researchers can analyze. It addresses the dual challenge of reducing human workload while maintaining scientific rigor, and it proposes standards for traceability and verifiability.

GENERAL STUDY OVERVIEW

Study design: Case studies on open-goal and fixed-goal research using annotated datasets.

Objective: Evaluate the capabilities of data-to-paper for hypothesis-driven research and manuscript creation.

Funding: Supported by Technion–Israel Institute of Technology and affiliated collaborators.

METHODS

Platform Capabilities: Guides multiple LLM and rule-based agents through sequential research steps, including hypothesis generation, data analysis, and manuscript creation.

Research Modalities:

Open-Goal: The platform defines the research goals autonomously.

Fixed-Goal: Research goals are predefined by human inputs.

Analysis Steps:

Hypothesis formulation

Literature searches

Statistical analyses with error checks

Scientific manuscript creation
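The sequential, checked workflow described above can be illustrated with a minimal sketch. This is not the data-to-paper API: `Step`, `run_pipeline`, and the stub agents are hypothetical names invented for illustration, and plain functions stand in for the LLM calls. The sketch only shows the general pattern of running steps in order and having a rule-based check send a step back for revision before the next step starts.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    """One research step (hypothetical structure, not the platform's own)."""
    name: str
    run: Callable[[Dict], str]          # stand-in for an LLM agent call
    check: Callable[[str], List[str]]   # rule-based reviewer: returns problems found


def run_pipeline(steps, context, max_attempts=3):
    """Run steps in order; retry each step until its rule-based check passes."""
    for step in steps:
        for _ in range(max_attempts):
            output = step.run(context)
            problems = step.check(output)
            if not problems:
                context[step.name] = output   # accepted output feeds later steps
                break
            context["feedback"] = problems    # pass the problems back to the agent
        else:
            raise RuntimeError(f"{step.name} failed: {problems}")
        context.pop("feedback", None)         # clear feedback before the next step
    return context


# Stub agents: the first hypothesis draft is empty, the revision is not.
def draft_hypothesis(ctx):
    return "Exposure X is associated with outcome Y" if "feedback" in ctx else ""


def write_analysis(ctx):
    return f"Test: {ctx['hypothesis']}"


def non_empty(text):
    return [] if text.strip() else ["output is empty"]


steps = [
    Step("hypothesis", draft_hypothesis, non_empty),
    Step("analysis", write_analysis, non_empty),
]
result = run_pipeline(steps, {"dataset": "example.csv"})
print(result["analysis"])
```

In this toy run the hypothesis step fails its check once, receives feedback, and succeeds on the second attempt. The analysis step then builds on the accepted hypothesis.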

Evaluation Metrics:

Accuracy of results

Novelty of insights

Traceability of research outputs

RESULTS

Participant Flow:

Datasets: Publicly available health indicators, social networks, and SARS-CoV-2 data.

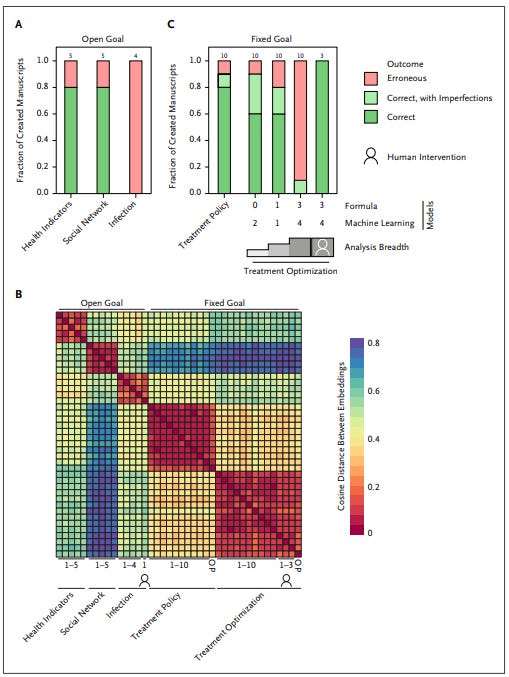

10 manuscripts generated for simple datasets in open-goal mode; additional complex studies conducted with fixed-goal inputs.

Key Outcomes:

Open-Goal: Correct and verifiable insights in 80–90% of cases for simple datasets; errors increased with dataset complexity.

Fixed-Goal: Reliable reproduction of peer-reviewed studies when research goals were provided.

"Data-chained" manuscripts linked results back to upstream data and code for full traceability.

Human copiloting improved accuracy and reliability for complex tasks.
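The "data-chained" idea noted above can be sketched in a few lines. This review does not describe how the platform implements chaining, so the following is a hypothetical illustration only: the `results` dictionary, the placeholder syntax, the `render` function, and all numeric values are made up. The point it shows is that every number in the text is filled in from a named analysis result, and each substitution is recorded with a fingerprint of the code that produced it.

```python
import hashlib
import re


def fingerprint(text: str) -> str:
    """Short, stable identifier for a piece of analysis code."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


# Hypothetical analysis output; the values are illustrative, not from the study.
analysis_code = "odds_ratio = fit_model(df).params['exposure']"
results = {
    "n_rows": {"value": 2530, "code": fingerprint(analysis_code)},
    "odds_ratio": {"value": 1.42, "code": fingerprint(analysis_code)},
}

template = (
    "Among {n_rows} records, exposure was associated "
    "with the outcome (OR = {odds_ratio})."
)


def render(template, results):
    """Fill placeholders and return the text plus a provenance table."""
    provenance = []

    def substitute(match):
        key = match.group(1)
        entry = results[key]  # a KeyError here means the number is untraceable
        provenance.append((key, entry["value"], entry["code"]))
        return str(entry["value"])

    text = re.sub(r"\{(\w+)\}", substitute, template)
    return text, provenance


text, provenance = render(template, results)
print(text)
for key, value, code_id in provenance:
    print(f"{key} = {value} (from code {code_id})")
```

Because the text never contains a hand-typed number, a reviewer can follow each value in the provenance table back to the code that generated it, which is the kind of verification the review attributes to data-chained manuscripts.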

LIMITATIONS

Fully autonomous operation was error-prone on complex datasets.

Manuscripts lacked high novelty and creativity.

The system was constrained to hypothesis-driven research on existing data.

Human oversight remained critical for ensuring quality.

AUTHORS’ CONCLUSIONS

Data-to-paper successfully demonstrates autonomous AI-driven research capabilities in generating verifiable manuscripts. For simple datasets and research goals, the platform performs with high reliability. However, complex tasks require human copiloting to mitigate errors and enhance quality. The platform's ability to chain data, methods, and results into verifiable manuscripts represents a significant advance in research transparency and traceability.

PRESENTERS’ CONCLUSION

This study illustrates the transformative potential of AI in automating scientific research. While fully autonomous applications are limited by errors in complex tasks, human-assisted workflows offer practical pathways to enhance research efficiency. The platform's emphasis on transparency and traceability sets a benchmark for future AI-driven research systems. Further developments could integrate real-time hypothesis generation and iterative study refinements, expanding the utility of such systems in diverse scientific fields.